What's all this about an AI bubble?

Your weekly dose of AI & startup news on our path to 1000 Aussie startups.

🇦🇺 Featured: What’s this about an AI Bubble?

Concerned? Same.

If there is an AI bubble, the first sign won’t be charts or think pieces. It will be VCs quietly ghosting you (jokes, they’re doing that already).

What actually happens in a pop scenario:

Funding gets stingier, not dead.

Side projects and “vibe apps” struggle first.

API/model prices drift up once the big labs stop lighting money on fire for growth.

It rhymes with the dot-com wobble: the teams that stayed lean, shipped real stuff, and kept talking to actual customers mostly survived.

There is a good roundup of bubble anxiety from the Replit and Credo AI founders here:

The spicier long-term issue might be energy. Big models eat power. Data centres already suck up a noticeable chunk of global electricity, and AI is a growing slice of that pie. The IEA has a nice sober overview of how AI is juicing demand and what that means this decade:

Short version: if you build things people genuinely use and keep your burn under control, you’ll probably be fine. Alright, onto this week.

🗞️ This Week’s Line-Up

🤔 AI Introspection - Is it possible?

🪡 #️⃣ Needle In The Hashtag Recap

🛠️ Hot Tools Freshly Baked 🛠️

👊 Welcome the new competitor - Kimi K2 Thinking (New Tool!!)

🎧 Podcast Pick - Is there an A.I. Bubble? And what if it pops?

🔥🔥🔥 Events not to be missed 🔥🔥🔥

💼 Jobs on demand

🦘 Memes of the Week

🪞 Do AIs learn to look inward when they have enough parameters?

Two new papers just took a swing at the “can models introspect?” question.

“Looking Inward: Language Models Can Learn About Themselves by Introspection”

They fine-tune models to predict their own behaviour and find that a model can sometimes predict itself better than a stronger model can. Almost like it has a tiny internal mirror:

“Privileged Self-Access Matters for Introspection in AI”

They argue that when you test models on things like “what temperature are you running at,” they basically get hypnotised by your prompt. Ask for something “totally unhinged” and they confidently report a high temp even when that is just wrong.

The vibe right now:

Models can sometimes predict their own behaviour better than an external observer.

That is not the same thing as “having an inner life.”

They are still extremely good at confidently bullshitting about themselves when the prompt nudges them to.

So for now, your LLM “inner monologue” is still closer to “trust me bro.”

🪡 #️⃣ Ongoing: Needle In The Hashtag

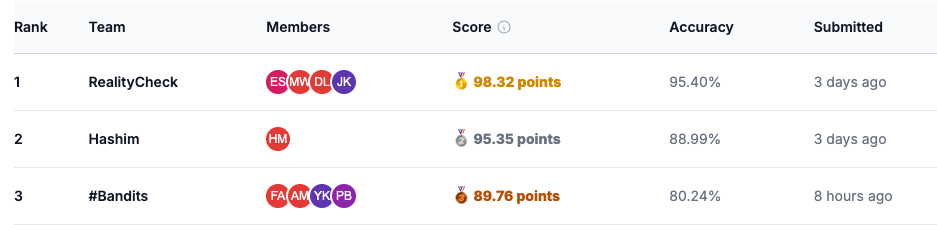

The Needle in the Hashtag Hackathon absolutely lit up Melbourne over the weekend and the momentum is still rolling. More than 150 participants across 30+ teams spent two days diving into the internet’s strangest, darkest corners, guided by experts from the eSafety Commissioner, the University of Melbourne, and speakers like Macken Murphy & David Gilmore. Teams built models to classify harmful personas, map online behaviour patterns, and prototype real interventions for real people caught in algorithmic rabbit holes.

So far the strongest result anyone’s achieved is a wild 95 percent accuracy at identifying who’s who inside our synthetic toxic social network. A reminder to all teams: submissions are due by midnight Friday, no exceptions. Keep pushing. Pitch Day hits on 11 December and it’s going to be massive.

🍞 Hot Tools, Freshly Baked

Short, opinionated rundown. Use at your own risk.

Ideanote - Idea funnel for teams. Capture, prioritise, and move from “shower thought” to “actually shipped feature” with workflows and tracking.

Three Sigma - Feed it the horrible document, it eats the horrible document, you get normal-human answers. Great for policies, contracts, and other PDFs that hate you.

Riverside - Studio-quality audio and video recordings in your browser. If you are still recording podcasts on Zoom, I am judging you.

Guru - Knowledge base that actually shows up where you work instead of rotting in Confluence. Surfaces answers inside Slack, Chrome, and friends.

Murf - Text-to-speech with voices that no longer sound like your GPS from 2011. Good for product videos, training material, and bootstrapped podcasts.

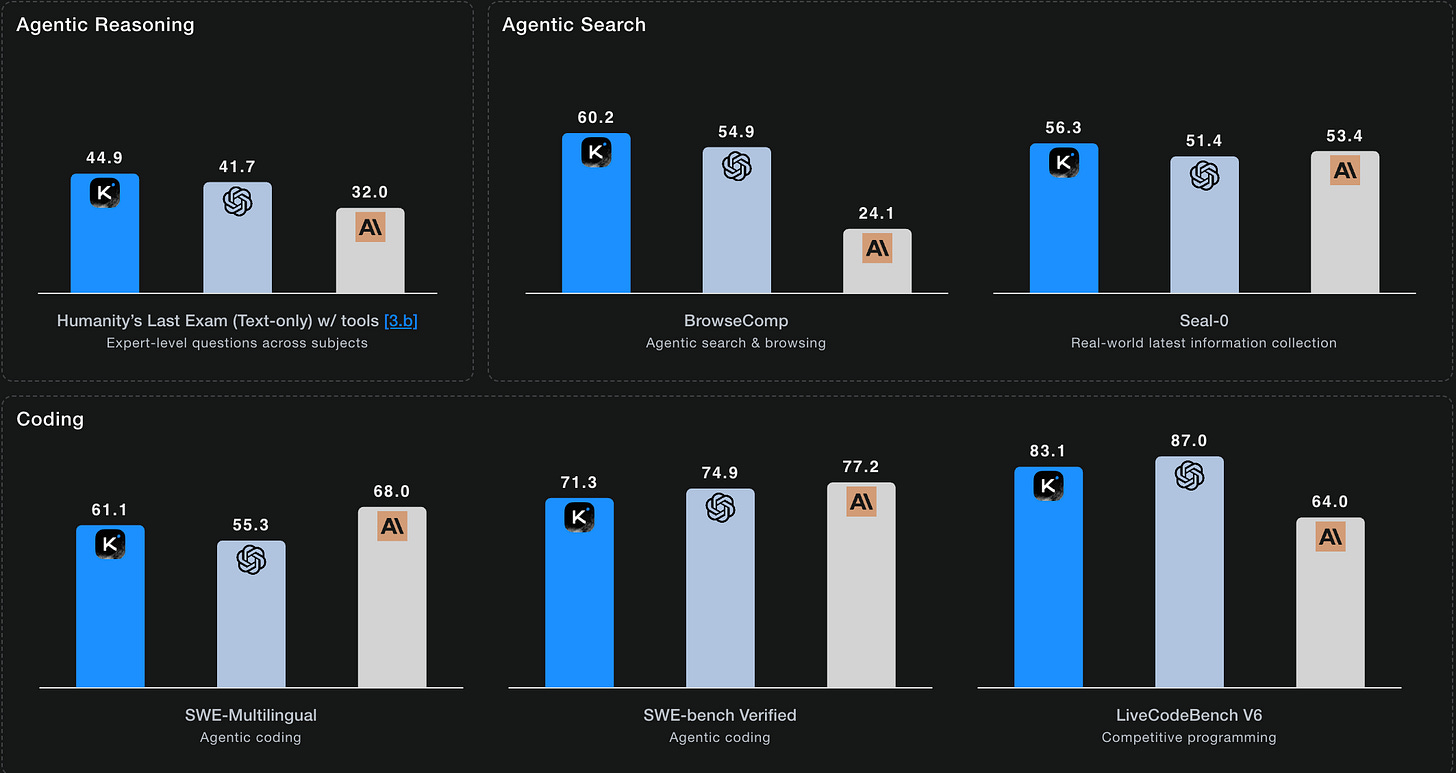

👊 For Hardcore Roos: Kimi K2 Thinking

Meet Kimi K2, the open-weight kid who showed up to the exam with a 1-trillion-parameter Mixture-of-Experts brain and then aced everything.

Rough specs:

MoE with about 1 trillion total params, ~32B active per token at inference

Trained on 15.5T tokens with a new MuonClip optimizer to keep training stable

Open weights, strong on coding, agentic tasks, tools, math, and general reasoning
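If you're wondering how a model can hold ~1T parameters but only use ~32B per token: in a Mixture-of-Experts layer, a small router scores every expert and only the top-k actually run for that token. Below is a toy sketch of generic top-k routing, assuming a plain softmax gate renormalised over the chosen experts. It is not Kimi K2's actual implementation, and the expert count, k, router scores and "experts" are made-up illustrative values.

```python
import math

def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k):
    # Rank experts by router score and keep only the k best.
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    # Renormalise gate weights over just the chosen experts.
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, router_logits, k):
    # Only the selected experts are evaluated; the rest cost nothing for this token.
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))

# Hypothetical toy setup: 8 "experts" that just scale their input by 1..8.
experts = [lambda x, s=i + 1: s * x for i in range(8)]
router_logits = [0.1, 2.0, -1.0, 1.5, 0.0, 0.3, -0.5, 0.9]

routes = top_k_route(router_logits, k=2)
print([i for i, _ in routes])  # -> [1, 3]
print(moe_forward(1.0, experts, router_logits, k=2))

# Rough share of weights touched per token, using the headline K2 figures.
active, total = 32e9, 1e12
print(f"{active / total:.1%}")  # -> 3.2%
```

Scale the same idea up to many experts per layer and you get the total-vs-active gap in the spec list. Real MoE layers also deal with things like load balancing and batched dispatch, which this toy skips.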

Highlights from the report:

Top-tier results on SWE-bench Verified, LiveCodeBench, OJBench, MultiPL-E

Strong on tool-use benchmarks like Tau2-Bench and ACEBench

Genuinely competitive on math and STEM benchmarks like AIME 2025 and GPQA

Surprisingly good open-ended writing, hanging out near GPT-4.1 and Claude Sonnet in some evals

You actually get to poke it, not just worship the leaderboard slide:

Overview and model family:

Kimi K2 - Open Agentic Intelligence

Full research paper:

Kimi K2: Open Agentic Intelligence (arXiv)

If you have been looking for a serious open-weight model to standardise on for coding and tool-calling, K2 is very much in the “you should at least benchmark me” bucket.

🎧 Podcast Picks

The Daily - “Is There an A.I. Bubble? And What if It Pops?”

Cade Metz walks through why tech giants are willing to torch trillions on data centres, why everyone keeps saying “AGI” with a straight face, and how all of this smells a bit like the dot-com era in both upside and risk.

📅 Upcoming Events

Use AI To Hack Your Way To Page #1 Of Google

🗓 Dec 6th 2025 | ⏰ 10:00 AM – 2:00 PM | 📍 Stone & Chalk

Want your startup on page one of Google without spending months guessing what to write? We’ll give you code for an AI agent that will research, write and publish for you, and capture spot #1 on Google search while you sleep. This event is EXPENSIVE ($100/ticket) because we’ll actually give you code and agents that we spent months working on. If you’re a struggling founder or student, email me (sam@mlai.au) with why you need hella discounts and I’ll give scholarships to a few peeps.

Build Your AI-Powered Lead Automations With Us (In 4 Hours, No Tech Skills or Big Budgets Needed)

🗓 Dec 13th 2025 | ⏰ 10:30 AM – 2:30 PM | 📍 Stone & Chalk

If you’ve been wanting to actually build real automations for your business instead of just watching AI demos, this session is for you.

Over 4 hours, we’ll guide founders, small teams, and creators through building one complete AI-powered lead-gen or sales automation that works in the real world.

No technical background needed! No complicated setup. You’ll walk out with a system that can capture, qualify, and route leads for you every day.

MLAI December Social

🗓 Dec 16th 2025 | ⏰ 6:00 PM – 8:00 PM | 📍 The Boatbuilders Yard

The MLAI December Social is the Machine Learning & AI Meetup’s no-talks, no-slides, pure-vibes end-of-year meetup where the whole community hangs out over dinner and drinks at The Boatbuilders Yard in Southbank. If you want to meet Melbourne’s ML/AI crowd without the formalities, this is the one to come to.

MYMI x MLAI: MedHack Part II

🗓 21st–22nd February 2026

Australia’s most chaotic health-tech hackathon. Team up with Hackers, Hustlers, Hipsters & Healers to solve real medical challenges and push digital health beyond buzzwords.

👉 Early Bird tickets here.

💼 Jobs on demand (This Week)

1. Developers - Lyra (Flexible)

Lyra is looking for talented (cracked) engineers to join their Melbourne team. What they want:

- 2 years of coding experience (personal projects count)

- Excitement for fast-paced startup life

- Good communication skills (English)

- Ambition & passion

👉 If you’re keen to apply, do it here:

2. Senior Product Data Engineer – Fair Supply

Fair Supply is hiring a senior data engineer to help build ESG and supply-chain risk products that actually uncover real issues like modern slavery and carbon impact. Melbourne hybrid team, Series A backed, lots of ownership across data models, pipelines and product features.

👉 If you’re keen to apply, do it here:

3. Summer Work Experience – Krish Agrawal

Krish is looking for an onsite summer placement for at least 40 days. If your team needs a motivated extra set of hands, he’s keen to chat.

👉 If you’re open to offering something, message him in the #jobs channel.

4. Donor and Community Manager – Alex Makes Meals (Melbourne)

A warm, organised communicator to nurture donors, write human-first outreach, run newsletters, improve supporter journeys, and help scale a fast-growing charity. Hybrid, 16–32 hours per week, salary negotiable.

👉 If you’re keen to apply, email alex@alexmakesmeals.com

https://open.substack.com/pub/evolvingtheory/p/the-ai-bubble-isnt-the-dot-com-bubble-b0d?utm_source=share&utm_medium=android&r=275w0u