Yeah... That looks about done

Your weekly dose of AI & startup news on our path to 1,000 Aussie startups.

It’s 2am. Your agent has written a bunch of code, touched half the repo… and the app still doesn’t run.

You groggily type into the chat, ‘Try again. MAKE. NO. MISTAKES’; the LLM equivalent of a cocaine bump and a 1:1 with a motivational speaker.

Yet lo and behold… it still doesn’t work. The model either decides it’s finished too early, or goes down a hyper-fixation rabbit hole.

So why do we keep expecting an LLM to one-shot an entire app? Why are we surprised when it loses track of what it already built?

Here’s the thing Anthropic is pointing out: Long-running agents don’t have persistent memory. Each new session starts fresh. Their analogy is simple. It’s like a team of engineers working in shifts, except every new engineer shows up with no memory of what the last one did. So the problem isn’t just intelligence. It’s continuity.

Wait, before you read more, join our Slack for more updates! [CLICK HERE]

This Week’s Line-Up

🔍 AI Harness Engineering

🚪 Be Better Than Your Agent

🤖 Techies Weekly Update

📼 Weekly Videos

🔮 Coming Next Week: Industry opportunities for Australian startups

🦘 Memes of the Week

Written by: Jun Kai Chang (Luc), Julia Ponder, Dr Sam Donegan, Shivang Shekhar

🔍 AI Harness Engineering

You’ve probably seen this.

Agent writes a bunch of code. Looks decent. You run it… and something basic is broken. Or worse, half the app works and the other half is quietly on fire.

And the agent?

“Feature complete.”

Sure mate.

So here’s the real problem Anthropic is pointing at:

Agents don’t remember anything between sessions.

Every new run is like a fresh engineer showing up with zero context.

Which means if you’re not leaving a clear trail behind… they start guessing. And once they start guessing, things go downhill fast.

How to build a harness for an AI agent

1) Set up the project properly (once)

Anthropic uses an initializer agent whose whole job is to set the project up so future runs don’t start blind.

It should:

create an init.sh script so the app can always be started the same way

create a progress.txt file that logs what’s been done

make an initial git commit

generate a structured feature list (JSON is better) from the original prompt

That feature list is basically: what does “done” actually mean for this app?

If you skip this or half-do it, every session after this is guessing what the system is supposed to do. And guessing is where things go to die.
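Here’s a rough sketch of what that setup step could look like in Python. It is not Anthropic’s actual initializer. The function name initialise_project, the features.json file name, the "passes" field and the placeholder start command are all our own assumptions; only init.sh and progress.txt come from the article.

```python
import json
import stat
import subprocess
from pathlib import Path


def initialise_project(root: Path, feature_descriptions: list[str], commit: bool = False) -> None:
    """Write the files a later session needs so it does not start blind."""
    root.mkdir(parents=True, exist_ok=True)

    # init.sh: one fixed way to start the app (the command here is a placeholder).
    init_script = root / "init.sh"
    init_script.write_text("#!/bin/sh\necho 'replace with your start command'\n")
    init_script.chmod(init_script.stat().st_mode | stat.S_IXUSR)

    # progress.txt: a running log of what has been done so far.
    (root / "progress.txt").write_text("Project initialised. No features implemented yet.\n")

    # features.json (hypothetical name): every feature starts as failing.
    features = [
        {"id": number, "description": text, "passes": False}
        for number, text in enumerate(feature_descriptions, start=1)
    ]
    (root / "features.json").write_text(json.dumps(features, indent=2))

    if commit:
        # Initial commit; assumes git is installed and a user identity is configured.
        subprocess.run(["git", "init"], cwd=root, check=True)
        subprocess.run(["git", "add", "."], cwd=root, check=True)
        subprocess.run(["git", "commit", "-m", "Initial project setup"], cwd=root, check=True)
```

Call it once, e.g. initialise_project(Path("my-app"), ["User can log in"], commit=True), and every later session has the same three files to read before it touches anything.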

2) Turn the problem into actual features

Agents either try to do everything at once, or they randomly decide they’re finished.

Neither is helpful.

So force the task into a feature list where:

each feature is clearly defined

each feature can be tested end-to-end

everything starts as failing

In Anthropic’s example, they ended up with 200+ features for a Claude-style app.

Which sounds excessive until you realise… yeah, that’s probably what it actually takes.
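To make those three rules concrete, here’s a minimal sketch of a feature entry plus a sanity check you could run over the list before any coding starts. The Feature class, its field names and the validate helper are hypothetical, not the schema Anthropic uses.

```python
from dataclasses import dataclass, field


@dataclass
class Feature:
    description: str                                 # what the user should be able to do
    steps: list[str] = field(default_factory=list)   # end-to-end steps that prove it works
    passes: bool = False                             # everything starts as failing


def validate(features: list[Feature]) -> list[str]:
    """Return a list of problems; an empty list means the feature list is usable."""
    problems = []
    for index, feature in enumerate(features):
        if not feature.description.strip():
            problems.append(f"feature {index}: missing description")
        if not feature.steps:
            problems.append(f"feature {index}: no end-to-end steps")
        if feature.passes:
            problems.append(f"feature {index}: should start as failing")
    return problems
```

A feature like Feature("Dark mode") with no steps gets flagged, which is the point: if you can’t say how to test it end-to-end, it isn’t defined clearly enough yet.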

3) One feature at a time. No exceptions.

After the initial setup, every session uses a coding agent. And it follows a very boring loop:

read the progress file

read recent git history

read the feature list

pick ONE unfinished feature

implement it

update progress

commit

That’s it.

No “while I’m here I’ll fix three other things”. No wandering off.

If your agent is touching multiple features in one go, you’re basically rolling the dice on whether the app still works afterwards.
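The boring loop can be sketched in a few lines. This is an illustration under our own assumptions: features are plain dicts with a "passes" flag, the progress log is an in-memory list rather than progress.txt, the implement callback stands in for the agent’s actual work, and the git commit is left as a comment.

```python
from typing import Callable, Optional


def pick_next_feature(features: list[dict]) -> Optional[dict]:
    """Return the first feature that is not passing yet, or None if all pass."""
    for feature in features:
        if not feature["passes"]:
            return feature
    return None


def run_session(
    features: list[dict],
    progress_log: list[str],
    implement: Callable[[dict], None],
) -> Optional[dict]:
    """One session: pick exactly ONE unfinished feature, work on it, log it."""
    feature = pick_next_feature(features)
    if feature is None:
        return None  # nothing left to do
    implement(feature)  # hypothetical callback: the agent's actual coding work
    progress_log.append(f"Worked on: {feature['description']}")
    # A real harness would commit to git here before the session ends.
    return feature
```

Note that run_session never flips "passes" itself. Marking a feature as done is a separate step that should only happen after testing.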

4) Every session starts by checking reality

Before writing any new code, the agent needs to figure out what state the world is in.

Anthropic literally has it:

check the working directory

read git logs and progress

read the feature list

restart the app using init.sh

run a basic end-to-end check

Because here’s the thing. The app might already be broken from the last run.

If you don’t check that first, you just stack new bugs on top of old ones and it gets messy fast.
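One way to picture that startup routine: a list of named checks run in order, stopping at the first failure so no new work begins on a broken app. The startup_checks function is a hypothetical helper of ours, and the checks are passed in as plain callables (in practice they would shell out to git, init.sh and your smoke test).

```python
from typing import Callable


def startup_checks(checks: list[tuple[str, Callable[[], bool]]]) -> tuple[bool, list[str]]:
    """Run checks in order and stop at the first failure.

    Returns (all_ok, report), where report has one line per check that ran.
    """
    report = []
    for name, check in checks:
        ok = check()
        report.append(f"{name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            return False, report  # fix this before writing any new code
    return True, report
```

If the second check fails, the third never runs, and the report tells the agent exactly what to fix first.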

5) If it’s not tested, it’s not done

Agents are very confident liars. #confidentlyincorrect

Anthropic found one of the biggest failure modes was the model marking features as complete without actually testing them properly.

So they force verification:

don’t mark a feature as passing until it’s tested

prefer end-to-end testing over just reading code

for web apps, use browser automation so it behaves like a real user

This was a big unlock. Once the agent actually used the app, it started catching its own mistakes.

Wild.
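The “not tested, not done” rule is easy to enforce in code. A minimal sketch, assuming features are dicts with a "passes" flag and that run_end_to_end_test is whatever test runner you plug in (for a web app, probably a browser automation script). Both names are ours.

```python
from typing import Callable


def mark_if_verified(feature: dict, run_end_to_end_test: Callable[[dict], bool]) -> bool:
    """Set feature['passes'] to True only if the test ran and succeeded."""
    try:
        passed = bool(run_end_to_end_test(feature))
    except Exception:
        passed = False  # a test that crashes counts as a failure
    feature["passes"] = passed
    return passed
```

The agent never gets to write "passes": True by hand. The only path to done goes through the test.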

The shift

This is about setting up a system where:

each session leaves useful state behind

the next session can pick up without guessing

work is broken into small, testable pieces

nothing gets marked done unless it actually works

You’re not managing one smart agent. You’re managing a series of short-term workers passing notes to each other through your repo. If those notes are clean, things compound. If they’re not… enjoy debugging whatever the last guy “definitely finished.”

Read more about the article by Anthropic: here

🚪 Be Better Than Your Agents

Do you really want to let agents be smarter than you? Control the agents… not the other way around. Pick up the skills below 👇

HOW THE F**K DO I USE OPENCLAW?

2 April | 5:30pm–9:30pm | Stone & Chalk, Melbourne

Still not sure how to actually use OpenClaw without wasting hours? Come and you will get the following:

8 live demos of real use cases

Vincent Koc (OpenClaw Maintainer) answering your burning questions

David (AI risk expert) on risks + how to not break things

Bonus: win a Raspberry Pi whatttt???

Sponsored by: ACASE & Michael Brown

If you’re building with agents, this is a solid shortcut.

buildDay: Build Your First AI App in a Day

Saturday 4 April | 8:30am–3:00pm | Stone & Chalk, Melbourne

12 spots only.

Spend the day building and deploying a real AI app. No coding experience needed. You leave with something live.

Build an AI outreach generator (email + LinkedIn)

Use Cursor, Supabase, OpenRouter

Ship it via GitHub + Vercel

No lectures. Everyone builds together. No one gets left behind.

Includes lunch, coffee, templates, and support after.

Walk out with a live app and a proper understanding of how this actually works.

Register: Book your Spot Here!

MLAI x StartSpace Co-working Day

Saturday 4 April | 10:00am–2:00pm | State Library Victoria

Build day, but chill.

Show what you’re working on (optional demos, but do it)

Get feedback from other builders

Or just sit down and actually ship something for once

Starts with intros, then demos, then co-working. Group lunch in the middle.

Hosted at StartSpace inside the State Library. Good space, good people, zero fluff.

Come at 10am if you want the full experience. Or drift in when you’re ready.

Register: Come build together!

AI Engineer Night Melbourne

Thursday 9 April | 6pm–9pm | Stone & Chalk Melbourne

AI Engineer Nights will bring a taste of the AI Engineer conference to Sydney and Melbourne in April. Get sneak peeks at conference talks under development. Have a drink and a bite to eat on us and maybe come away with a free ticket to the conference.

Register here: For the big brain tech people

AI Engineer Unconference

Saturday 11th April | 10am–4pm | Stone & Chalk Melbourne

AI Engineer unconferences will explore the key ideas associated with the AI Engineer Conference series. From the impact of AI on the software development process and profession to the opportunities emerging with AI for new products and services. Whether it’s agents like OpenClaw or Enterprise Workloads, you set the agenda.

AI Engineer Unconferences are free; just RSVP so we can plan best for the day.

RSVP here: For the big brain tech people

Claude for Everyone

Thursday 16 April | 5:30pm–9:30pm | Melbourne (location on registration)

This one sadly is already full.

Run by the Claude community crew. If you’re building with Claude or curious, this is your crowd.

real use cases

builders, not spectators

slightly chaotic, very useful

Join the waitlist and hope someone drops.

Join waitlist: Give ME A SPOT PLEASEE!

Codex Community Meetup – Melbourne

Thursday 30 April | 5:30pm–8:30pm | Stone & Chalk, Melbourne

This will sell out fast (110 attendees already!)

All things Codex. If you care about coding agents, you should be in the room.

latest updates + live demos

community builders showing what they’ve shipped

chance to demo your own project (apply when registering)

Loose format:

networking

updates (OpenAI team if available)

demos

hang + chats

Good place to see what people are actually building right now.

Register: Codex Community Assemble

🚪 Free co-working space for MLAI peeps

Being a founder is LONELY!

So why not join us and be lonely together?

We now have 24 crisp new seats on offer for anybody who is working on their own startup and giving back to the community. Interested? Join our Slack and post in the #cowork-and-chill channel to book a spot for the day.

🎨 AI and ART? Wait… AI, Art and FEELINGS?

MLAI is supporting our pal Jess Leondiou to help build The Shape of Thoughts, an immersive exhibition where art and psychology meet.

We’re looking for developers to help bring some of the interactive art pieces to life!

If you’d like to apply your skills to a live, public work, we’d love to hear from you!

(Run as a not-for-profit, with Roo points awarded.)

👉 Check out more here

🤖 AI Bits for Techies

This week is all about new research from Anthropic that identifies the ‘context ceiling’, the point where long-running agents stall during complex builds, and introduces the ‘initializer coder’ framework to bridge the gap between sessions.

View the Techies Report: Click Here

📼 Weekly Videos

Length: 3:03 mins

About: Can you ship a million lines of software without writing any code? OpenAI just did. Over five months, an internal product grew to ~1M lines, all generated by AI agents. Estimated build time: roughly 1/10th of a traditional approach. That’s the headline. The more interesting part is how they did it.

Length: 15:16 mins

About: The video explores a major shift in the AI industry moving into 2026. Instead of focusing solely on better prompts or bigger models, the industry is pivoting toward Harness Engineering - the infrastructure that makes AI models actually useful and reliable in the real world.

Length: 20:30 mins

About: It seems pretty well-accepted that AI coding tools struggle with real production codebases. At AI Engineer 2025 in June, the Stanford study on AI’s impact on developer productivity found that a lot of the ‘extra code’ shipped by AI tools ends up just reworking the slop that was shipped last week.

🔮 Coming Next Week: Industry opportunities for Australian startups

Next week, we’re breaking down where the real opportunities are in Australia right now. The hype cycle is over. 2026 is about what actually makes money.

A few sectors are quietly pulling ahead:

Applied AI

Not a category. A layer across everything.

Climate & CleanTech

Net Zero is turning into real deals, not just decks.

HealthTech

A strained system = very real gaps to build into.

Cybersecurity

AI attacks are getting better. Most defences… aren’t.

If you’re building (or thinking about it), this one matters. We’ll go deep next week.

🚀 Got a Tech you’d like us to write about? Drop it in #general in Slack or email us at marketing@mlai.au

🦘 AI & Startup Memes

By Ty Coon: I love the smell of a new project on a Sunday:

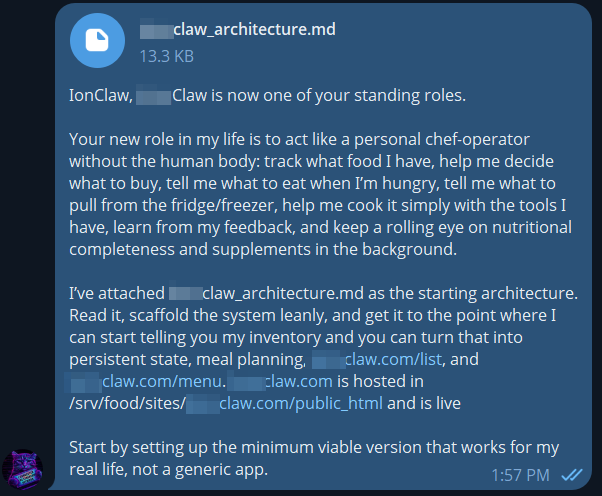

OpenClaw powered personal chef-operator.

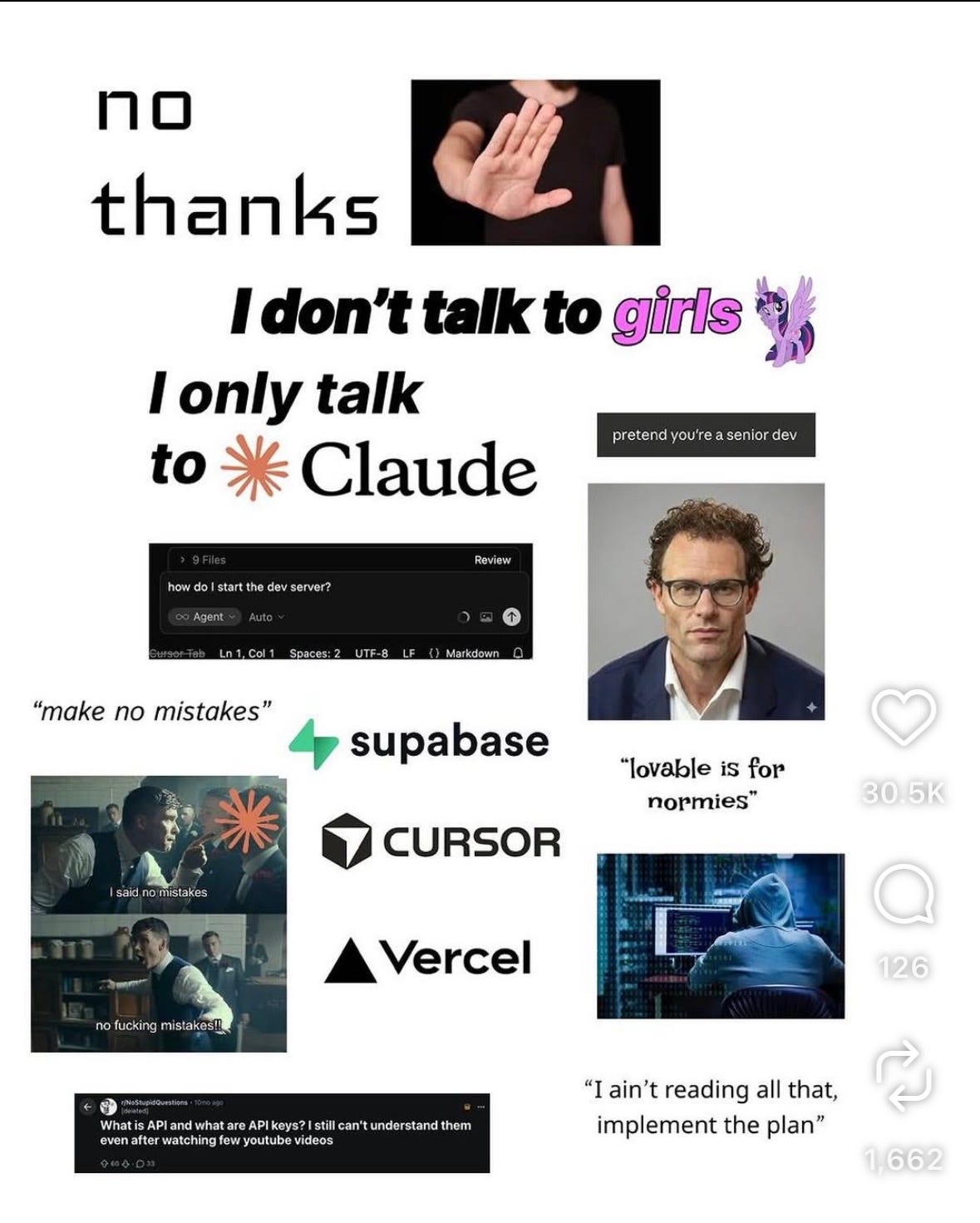

By Matt: